Aren’t we done with games yet? Some would say that while games were useful for AI research for a while, our algorithms have mastered them now, and it is time to move to real problems in the real world. I would say that AI has barely gotten started with games, and we are more likely to be done with the real world before we are done with games.

I’m sure you think you’ve heard this one before. Both reinforcement learning and tree search were largely developed in the context of board games. Adversarial tree search took significant steps forward because we wanted our programs to play Chess better, and for more than a decade, TD-Gammon, Tesauro’s 1992 Backgammon player, was the only good example of reinforcement learning being good at something. Later on, the game Go catalyzed the development of Monte Carlo Tree Search. A little later still, simple video games like those made for the old Atari VCS helped us make reinforcement learning work with deep networks. By pushing those methods hard and sacrificing immense amounts of compute to the almighty Gradient, we could teach these networks to play complex games such as Dota 2 and StarCraft II. But then it turns out that networks trained to play a video game aren’t necessarily any good at tasks other than playing video games. Even worse, they aren’t even good at playing another video game, another level of the same game, or the same level with slight visual distortions. Sad, really. Many ideas have been proposed for improving this situation, but progress is slow. And that’s where we are.

As I said, that’s not the story I’m going to tell here. I’ve said it before, at length. Also, I just told it briefly above.

It’s not controversial to say that the most impressive results in AI from the last few years have not come from reinforcement learning or tree search. Instead, they have come from self-supervised learning. Large language models, which are trained to do something as simple as predicting the next word (okay, technically the next token) given some text, have proven incredibly capable. Not only can they write prose in a wide variety of styles, but they can also answer factual questions, translate between languages, impersonate your imaginary childhood friends, and do many other things they were not trained for. It’s pretty impressive, and we’re not sure what’s going on beyond the fact that the Gradient and the Data did it. Of course, learning to predict the next word is an idea that goes back at least to Shannon in the 1940s, but what changed was scale: more data, more computing power, and bigger and better networks. In a parallel development, unsupervised learning on images has advanced from barely being able to generate generic, blurry faces to creating high-quality, high-resolution illustrations of arbitrary prompts in arbitrary styles. Most people could not produce a photorealistic picture of a wolf made from spaghetti, but DALL-E 2 presumably could. A big part of this is the progression in methods from autoencoders to GANs to diffusion models, but an arguably more important reason for this progress is the use of slightly obscene amounts of data and computing.

As impressive as progress in language and image generation is, these modalities are not grounded in actions in a world. We describe the world with words, and we do things with words. (I take an action when I ask you to pass me the sugar, and you react to this, for example, by passing the sugar.) Still, GPT-3 and its ilk do not have a way to relate what they say to actions and their consequences in the world. In fact, they do not really have a way of relating to the world at all; instead, they say things that “sound good” (are probable next words). If what a language model says happens to be factually true about the world, that’s a side effect of its aesthetics (likelihood estimates). And to say that current language models are fuzzy about the truth is a bit of an understatement; recently, I asked GPT-3 to generate biographies of me, and they were typically a mix of some verifiably true statements (“Togelius is a leading game AI researcher”) and plenty of plausible-sounding but untrue statements, such as that I was born in 1981 or that I’m a professor at the University of Sussex. Some of these false statements are flattering, such as that I invented AlphaGo, and others less flattering, such as that I’m from Stockholm.

We have come to the point in any self-respecting blog post about AI where we ask what intelligence is, really. And really, it is about being an agent that acts in a world of some kind. The more intelligent the agent is, the more “successful” or “adaptive” or something like that the acting should be relative to a world or a set of environments in a world.

Now, language models like GPT-3 and image generators like DALL-E 2 are not agents in any meaningful sense of the word. They did not learn in a world; they have no environments they are adapted to. Sure, you can twist the definition of agent and environment to say that GPT-3 acts when it produces text and its environment is the training algorithm and data. But the words it produces do not have meaning in that “world.” A pure language model never has to learn what its words mean because it never acts or observes consequences in the world from which those words derive meaning. GPT-3 can’t help lying because it has no skin in the game. I have no worries about a language model or an image generator taking over the world, because they don’t know how to do anything.

Let’s go back to talking about games. (I say this often.) For some types of games, reinforcement learning has not been demonstrated to work. Sure, tree search poses unreasonable demands on its environments (it needs fast forward models), and reinforcement learning is inefficient and has a terrible tendency to overfit, so that after spending huge compute resources, you end up with a clever but oh-so-brittle model. Imagine training a language model like GPT-3 with reinforcement learning and some text quality-based reward function; it would be possible, but I’ll see you in 2146 when it finishes training.

But what games have got going for them is that they are about taking action in a world and learning from the effects of the actions. Not necessarily the same world that we live most of our lives in, but often something close to that, and always a world that makes sense for us (because the games are made for us to play). Also, there is an enormous variety among those worlds and their environments. If you think that all games are arcade games from the eighties or first-person shooters where you fight demons, you need to educate yourself, preferably by playing more games. There are games (or whatever you want to call them, interactive experiences?) where you run farms, plot romantic intrigues, unpack boxes to learn about someone’s life, cook food, build empires, dance, take a hike, or work in pizza parlors, to take some examples off the top of my head. Think of an activity that humans do with some regularity, and I’m sure someone has made a game that represents this activity at some level of abstraction. And in fact, many activities and situations in games do not exist (or are very rare) in the real world. As more of our lives move into virtual domains, the affordances and intricacies of these worlds will only multiply. The ingenious mechanism that creates more relevant worlds to learn to act in is the creativity of human game designers; because originality is rewarded (at least in some game design communities), designers compete to come up with new situations and procedures to make games out of.

Awesome. Now, how could we use this immense variety of worlds, environments, and tasks to learn more general intelligence that is truly agentic? If tree search and reinforcement learning are not enough to do this on their own, is there a way we could leverage the power of unsupervised learning on massive datasets for this?

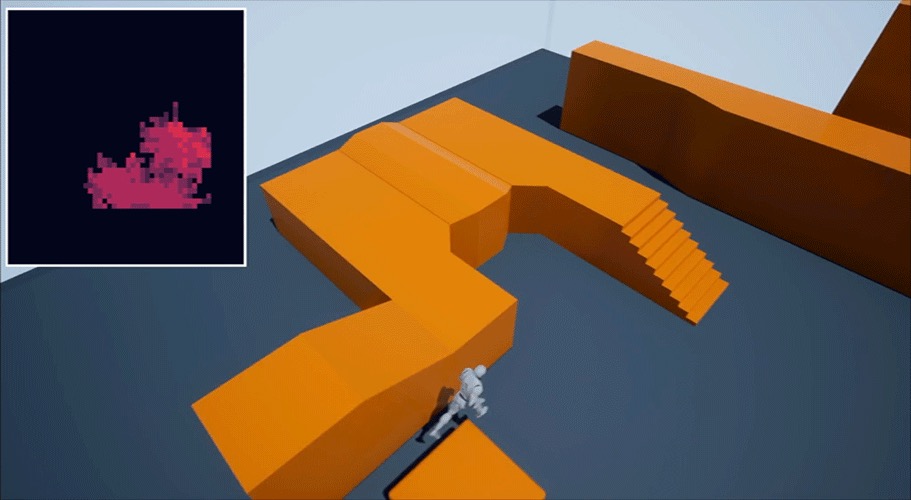

Yes, there is. But this requires a shift in mindset: we are going to learn as-general-as-we-can artificial intelligence not only from games but also from gamers. Because while there are many games out there, there are even more gamers. Billions of them! My proposition here is simple: train enormous neural networks to learn to predict the next action given an observation of a game state (or perhaps a sequence of several previous game states). This is essentially what the player is doing when watching the screen of a game and manipulating a controller, mouse, or keyboard to play it. It is also a close analog of training a large language model on a vast variety of different types of human-written text. And while the state observation from most games is mainly visual, we know from GANs and diffusion models that self-supervised learning can work very effectively on image data.
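To make the proposal a bit more concrete, here is a minimal sketch of next-action prediction from play traces (what is usually called behavior cloning). Everything in it is an assumption made for illustration: the toy "game" where an observation is just the offset to a target, the scripted `expert_action` standing in for a human player, and the tiny softmax classifier standing in for an enormous neural network. The point is only the shape of the training problem: observations in, the player's action as the label.

```python
import math
import random

# Hypothetical toy game: an observation is the (dx, dy) offset from the
# player to a target, and there are four possible actions.
ACTIONS = ["left", "right", "up", "down"]


def expert_action(obs):
    """Stand-in for a human player: move along the larger offset."""
    dx, dy = obs
    if abs(dx) >= abs(dy):
        return ACTIONS.index("right") if dx > 0 else ACTIONS.index("left")
    return ACTIONS.index("up") if dy > 0 else ACTIONS.index("down")


def make_traces(rng, n):
    """Generate (observation, action) pairs, as a play-trace log would."""
    traces = []
    for _ in range(n):
        obs = (rng.uniform(-1.0, 1.0), rng.uniform(-1.0, 1.0))
        traces.append((obs, expert_action(obs)))
    return traces


def action_probs(weights, obs):
    """Softmax over one linear score per action (bias as a constant input)."""
    x = list(obs) + [1.0]
    logits = [sum(w * v for w, v in zip(row, x)) for row in weights]
    top = max(logits)
    exps = [math.exp(z - top) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def train(traces, epochs=10, lr=0.5):
    """Fit the policy with SGD on the cross-entropy of the logged action."""
    n_inputs = len(traces[0][0]) + 1
    weights = [[0.0] * n_inputs for _ in ACTIONS]
    for _ in range(epochs):
        for obs, action in traces:
            x = list(obs) + [1.0]
            probs = action_probs(weights, obs)
            for a, row in enumerate(weights):
                error = probs[a] - (1.0 if a == action else 0.0)
                for j in range(n_inputs):
                    row[j] -= lr * error * x[j]
    return weights


def predict(weights, obs):
    """Return the index of the most probable next action."""
    probs = action_probs(weights, obs)
    return max(range(len(ACTIONS)), key=probs.__getitem__)


rng = random.Random(0)
train_traces = make_traces(rng, 2000)
test_traces = make_traces(rng, 500)
policy = train(train_traces)
accuracy = sum(predict(policy, o) == a for o, a in test_traces) / len(test_traces)
print(f"held-out agreement with the player: {accuracy:.2f}")
```

The objective is the same one used for next-token prediction, with the action vocabulary in place of the token vocabulary. A real behavior foundation model would presumably swap the hand-made observation for pixels (or a sequence of frames) and the linear model for a large network.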

So, if we manage to train deep learning models that take descriptions of game states as inputs and produce actions as output (analogously to a model that takes a text as input and produces a new word, or takes an image as input and produces a description), what does this get us? To paraphrase a famous philosopher, the foundation models have described the world, but the behavior foundation models will change it. The output will be actions situated in a world of sorts, which differs from text and images.

I don’t want to give the impression that I believe that this would “solve intelligence”; intelligence is not that kind of “problem”. But I believe that behavior foundation models trained on a large variety (and volume) of gameplay traces would help us learn much about intelligence, particularly if we see intelligence as adaptive behavior. It would also almost certainly give us models that would be useful for robotics and all kinds of other tasks that involve controlling embodied agents, including, of course, video games.

I think the main reason that this has not already been done is that the people who would do it don’t have access to the data. Most modern video games “phone home” to some extent, meaning that they send data about their players to the developers. This data is mostly used to understand how the games are played, as well as for balancing and bug fixing. The extent and nature of this data vary widely, with some games mostly sending session information (when did you start and finish playing, which levels did you play) and others sending much more detailed data. It is probably very rare to log data at the level of detail we would need to train foundation models of behavior, but it is certainly possible and almost certainly already done by some games. The problem is that game development companies tend to be extremely protective of this data, as they see it as business-critical.

There are some datasets available out there to start with, for example, one used to learn from demonstrations in Counter-Strike: Global Offensive (CS:GO). Other efforts, including some I’ve been involved in myself, used much less data. However, to train these models properly, you would probably need very large amounts of data from many different games. We would need a Common Crawl or at least an ImageNet of game behavior. (There is a Game Trace Archive, which could be seen as a first step.)

There are many other things that need to be worked out as well. What are the inputs – pixels, or something more clever? How frequently does the data need to be captured? The outputs also differ somewhat between games (except on consoles, which use standardized controllers and conventions) – should there be some intermediate representation? And, of course, there’s the question of what kind of neural architecture would best support these kinds of models.
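One way to picture the representation questions is as a data format. The sketch below is purely hypothetical: the `TraceStep` record, the `SHARED_ACTIONS` vocabulary, the two made-up games in `GAME_BINDINGS`, and the `resample` helper are all invented here to illustrate what an intermediate action representation and a capture-frequency knob could look like. It is not a description of any existing dataset or logging API.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

# Hypothetical shared action vocabulary that every game is mapped onto.
SHARED_ACTIONS = (
    "noop", "move_left", "move_right", "move_up", "move_down",
    "primary", "secondary",
)

# Hypothetical per-game bindings from raw inputs to the shared vocabulary.
GAME_BINDINGS: Dict[str, Dict[str, str]] = {
    "platformer_demo": {"A": "move_left", "D": "move_right", "SPACE": "primary"},
    "shooter_demo": {
        "LEFT": "move_left", "RIGHT": "move_right",
        "MOUSE1": "primary", "MOUSE2": "secondary",
    },
}


@dataclass(frozen=True)
class TraceStep:
    """One logged moment of play: what the player saw and what they did."""
    game_id: str
    tick: int
    observation: Tuple[float, ...]  # pixels or features, flattened
    action: str                     # one of SHARED_ACTIONS


def to_shared_action(game_id: str, raw_input: str) -> str:
    """Translate a game-specific input into the shared vocabulary."""
    return GAME_BINDINGS.get(game_id, {}).get(raw_input, "noop")


def resample(steps: List[TraceStep], every: int) -> List[TraceStep]:
    """Keep every `every`-th step to emulate a lower capture frequency."""
    if every < 1:
        raise ValueError("every must be at least 1")
    return steps[::every]


raw_log = ["MOUSE1", "LEFT", "LEFT", "F12", "RIGHT", "MOUSE2"]
steps = [
    TraceStep("shooter_demo", tick, (0.0,), to_shared_action("shooter_demo", key))
    for tick, key in enumerate(raw_log)
]
half_rate = resample(steps, every=2)
```

A flat vocabulary like this throws away a lot (analog sticks, mouse movement, simultaneous inputs), which is exactly why the choice of intermediate representation is an open question rather than a detail.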

Depending on how you plan to use these models, there are some ethical considerations. One is that we would be building on lots of information that players are giving away by playing games. This is, of course, already happening, but most people are not aware that some real-world characteristics of people are predictable from play traces. As the behavior exhibited by trained models would not be any particular person’s playstyle, and we are not interested in identifiable behavior, this may be less of a concern. Another thing to think about is what kind of behavior these models will learn from game traces, given that the default verb in many games is “shoot”. And while a large portion of the world’s population plays video games, the demographics are still skewed. It will be interesting to study what the equivalent of conditional inputs or prompting will be for foundation models of behavior, allowing us to control the output of these models.

Personally, I think this is the most promising road not yet taken to more general AI. I say this both in my academic role as head of the NYU Game Innovation Lab and as research director at our game AI startup modl.ai, where we plan to use foundation models to enable game agents and game testing. If anyone reading this has a large dataset of game behavior and wants to collaborate, please shoot me an email! Or, if you have a game with players and want modl.ai to help you instrument it to collect data to build such models (which you could use), we’re all ears! I’m ready to get started.

PS. Yesterday, as I was revising this blog post, DeepMind released Gato, a huge transformer network that (among many other things) can play a variety of Atari games based on training on thousands of playtraces. My first thought was, “damn! they already did more or less what I was planning to do!”. But, impressive as the results are, that agent is still trained on relatively few playtraces from a handful of dissimilar games of limited complexity. There are many games in the world that have millions of daily players, and there are millions of games available across the major app stores. Atari VCS games are some of the simplest video games there are, both in terms of visual representation and mechanical and strategic complexity. So, while Gato is a welcome step forward, the real work is ahead of us!

Thanks to those who read a draft of this post and helped improve it: M Charity, Aaron Dharna, Sam Earle, Maria Edwards, Christoffer Holmgård, Ahmed Khalifa, Sebastian Risi, Graham Todd, Georgios Yannakakis, Michael Green.